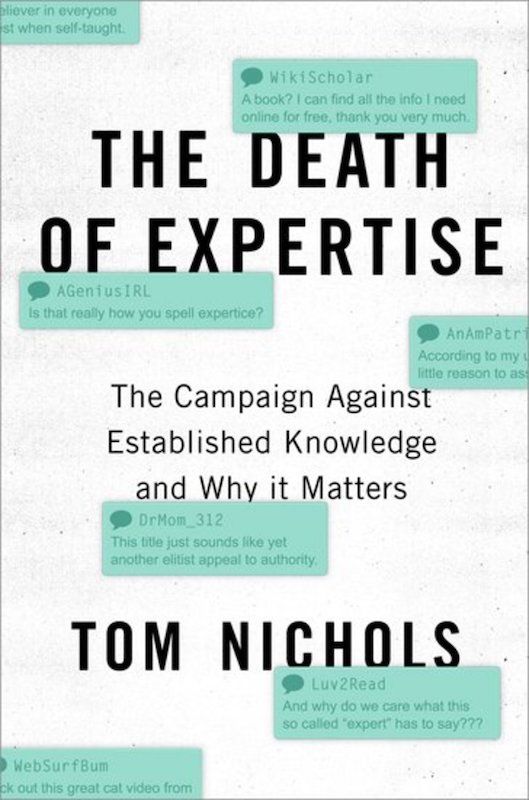

The Death of Expertise: The Campaign Against Established Knowledge and Why It Matters

A timely but excessively cranky book. Nichols' "The Death of Expertise" rails against the public's rejection of expert authority. Nichols' goal was to present the strongest possible case for his point of view - namely that democratic "equality", sloppy news-ertainment, coddled university students, and universal internet access have created a society that increasingly relies on feelings rather than facts to make major decisions.

There are many aspects of his critique that hit home. Yale is actually singled out for criticism in Nichols' section on "Higher Education", with references to both the Silliman screamer and the revolt against the Major English Poets course. He points out the "bizarre paradox in which college students are demanding to run the school while at the same time insisting that they be treated as children" and quite correctly writes that:

When feelings matter more than rationality or facts, education is a doomed enterprise. Emotion is an unassailable defense against expertise.

Another fun Yale connection is that Nichols cites David Broockman (Yale '11) and Josh Kalla's (Yale '13) takedown of LaCour and Green's "An Experiment on Transmission of Support for Gay Equality".

But there were other parts of the book that I thought were quite unfair. Summoning his best crotchety old professor voice, Nichols inveighs against the false confidence of those who teach themselves without professional oversight. But it's never clear to me why he so disdains autodidacts. He places a high premium on experience, but as I read the book I was constantly reminded of Otto von Bismarck's famous quote, "Fools learn from experience. I prefer to learn from the experience of others."

While making his case for the importance of experts, Nichols distinctly fails to acknowledge major recent "expert" failures like the chemical weapons justification for invading Iraq or the 2008 financial crash. Going back to Vietnam, the long history of "experts" making poor decisions - particularly in policy circles - probably goes a long way in explaining the public's hesitance to accept their word at face value. But this facet of the issue is ignored in Nichols' book.

Furthermore, his chapter on journalism wasn't nearly as strong as its counterpart in "The War on Science". The most interesting parts were his warnings to experts to be responsible about what they say to the media.

This is a book that came out at the right time and lands a few punches, but it ultimately feels incomplete and unfairly pessimistic.

My highlights below:

PREFACE

The United States is now a country obsessed with the worship of its own ignorance.

To reject the advice of experts is to assert autonomy, a way for Americans to insulate their increasingly fragile egos from ever being told they’re wrong about anything. It is a new Declaration of Independence: no longer do we hold these truths to be self-evident, we hold all truths to be self-evident, even the ones that aren’t true. All things are knowable and every opinion on any subject is as good as any other.

But there is a self-righteousness and fury to this new rejection of expertise that suggest, at least to me, that this isn’t just mistrust or questioning or the pursuit of alternatives: it is narcissism, coupled to a disdain for expertise as some sort of exercise in self-actualization.

More than anything, I hope this work contributes to bridging the rift between experts and laypeople that in the long run threatens not only the well-being of millions of Americans, but also the survival of our democratic experiment.

Introduction - The Death of Expertise

There is a cult of ignorance in the United States, and there always has been. The strain of anti-intellectualism has been a constant thread winding its way through our political and cultural life, nurtured by the false notion that democracy means that “my ignorance is just as good as your knowledge.” -Isaac Asimov

These are dangerous times. Never have so many people had so much access to so much knowledge and yet have been so resistant to learning anything.

Not only do increasing numbers of laypeople lack basic knowledge, they reject fundamental rules of evidence and refuse to learn how to make a logical argument. In doing so, they risk throwing away centuries of accumulated knowledge and undermining the practices and habits that allow us to develop new knowledge.

On the other hand, many experts, and particularly those in the academy, have abandoned their duty to engage with the public. They have retreated into jargon and irrelevance, preferring to interact with each other only. Meanwhile, the people holding the middle ground to whom we often refer as “public intellectuals” — I’d like to think I’m one of them—are becoming as frustrated and polarized as the rest of society.

The death of expertise is not just a rejection of existing knowledge. It is fundamentally a rejection of science and dispassionate rationality, which are the foundations of modern civilization.

Universal education, the greater empowerment of women and minorities, the growth of a middle class, and increased social mobility all threw a minority of experts and the majority of citizens into direct contact, after nearly two centuries in which they rarely had to interact with each other. And yet the result has not been a greater respect for knowledge, but the growth of an irrational conviction among Americans that everyone is as smart as everyone else. This is the opposite of education, which should aim to make people, no matter how smart or accomplished they are, learners for the rest of their lives. Rather, we now live in a society where the acquisition of even a little learning is the endpoint, rather than the beginning, of education. And this is a dangerous thing.

When students become valued clients instead of learners, they gain a great deal of self-esteem, but precious little knowledge; worse, they do not develop the habits of critical thinking that would allow them to continue to learn and to evaluate the kinds of complex issues on which they will have to deliberate and vote as citizens.

In a free society, journalists are, or should be, among the most important referees in the great scrum between ignorance and learning. And what happens when citizens demand to be entertained instead of informed?

This does not mean that every American must engage in deep study of policy, but if citizens do not bother to gain basic literacy in the issues that affect their lives, they abdicate control over those issues whether they like it or not. And when voters lose control of these important decisions, they risk the hijacking of their democracy by ignorant demagogues, or the more quiet and gradual decay of their democratic institutions into authoritarian technocracy.

1 - Experts and Citizens

The public space is increasingly dominated by a loose assortment of poorly informed people, many of them autodidacts who are disdainful of formal education and dismissive of experience. “If experience is necessary for being president,” the cartoonist and author Scott Adams tweeted during the 2016 election, “name a political topic I can’t master in one hour under the tutelage of top experts,” as though a discussion with an expert is like copying information from one computer drive to another. A kind of intellectual Gresham’s Law is gathering momentum: where once the rule was “bad money drives out good,” we now live in an age where misinformation pushes aside knowledge.

In the early 1970s, the science fiction writer Robert Heinlein issued a dictum, often quoted since, that “specialization is for insects.” Truly capable human beings, he wrote, should be able to do almost anything from changing a diaper to commanding a warship. It is a noble sentiment that celebrates human adaptability and resilience, but it’s wrong. While there was once a time when every homesteader lumbered his own trees and built his own house, this not only was inefficient, but produced only rudimentary housing. [NOTE: This seems like a very unfair interpretation. Ignores computers and the internet]

The fact of the matter is that we cannot function without admitting the limits of our knowledge and trusting in the expertise of others.

The issue is not indifference to established knowledge; it’s the emergence of a positive hostility to such knowledge. This is new in American culture, and it represents the aggressive replacement of expert views or established knowledge with the insistence that every opinion on any matter is as good as every other. This is a remarkable change in our public discourse.

In the case of vaccines, for example, low rates of participation in child vaccination programs are actually not a problem among small-town mothers with little schooling. Those mothers have to accept vaccinations for their kids because of the requirements in the public schools. The parents more likely to resist vaccines, as it turns out, are found among educated San Francisco suburbanites in Marin County. While these mothers and fathers are not doctors, they are educated just enough to believe they have the background to challenge established medical science. Thus, in a counterintuitive irony, educated parents are actually making worse decisions than those with far less schooling, and they are putting everyone’s children at risk.

At the root of all this is an inability among laypeople to understand that experts being wrong on occasion about certain issues is not the same thing as experts being wrong consistently on everything.

Put another way, experts are the people who know considerably more on a subject than the rest of us, and are those to whom we turn when we need advice, education, or solutions in a particular area of human knowledge. Note that this does not mean that experts know all there is to know about something. Rather, it means that experts in any given subject are, by their nature, a minority whose views are more likely to be “authoritative” — that is, correct or accurate — than anyone else’s.

True expertise, the kind of knowledge on which others rely, is an intangible but recognizable combination of education, talent, experience, and peer affirmation.

Experience and professional affirmation matter, but it’s also true that there is considerable wisdom in the old Chinese warning to beware a craftsman who claims twenty years of experience when in reality he’s only had one year of experience twenty times.

Second, and related to this point about relative skill, experts will make mistakes, but they are far less likely to make mistakes than a layperson. This is a crucial distinction between experts and everyone else, in that experts know better than anyone the pitfalls of their own profession. As the renowned physicist Werner Heisenberg once put it, an expert “is someone who knows some of the worst mistakes that can be made in his subject and how to avoid them.” (His fellow physicist Niels Bohr had a different take: “An expert is someone who has made all the mistakes which can be made in a very narrow field.”)

Knowing things is not the same as understanding them. Comprehension is not the same thing as analysis. Expertise is not a parlor game played with factoids.

2 - How Conversation Became Exhausting

Yeah, well, you know, that’s just, like, your opinion, man. -“The Dude,” The Big Lebowski

The Dunning-Kruger Effect, in sum, means that the dumber you are, the more confident you are that you’re not actually dumb.

Other than in fields like athletic competition, where incompetence is manifest and undeniable, it’s normal for people to avoid saying they’re bad at something. [NOTE: Importance of sports]

As it turns out, however, the more specific reason that unskilled or incompetent people overestimate their abilities far more than others is because they lack a key skill called “metacognition.” This is the ability to know when you’re not good at something by stepping back, looking at what you’re doing, and then realizing that you’re doing it wrong.

People simply do not understand numbers, risk, or probability, and few things can make discussion between experts and laypeople more frustrating than this “innumeracy,” as the mathematician John Allen Paulos memorably called it.

This is how, for example, a 2014 study of public attitudes about gay marriage went terribly wrong. A graduate student claimed he’d found statistically unassailable proof that if opponents of gay marriage talked about the issue with someone who was actually gay, they were likelier to change their minds. His findings were endorsed by a senior faculty member at Columbia University who had signed on as a coauthor of the study. It was a remarkable finding that basically amounted to proof that reasonable people can actually be talked out of homophobia. The only problem was that the ambitious young researcher had falsified the data. The discussions he claimed he was analyzing never took place. When others outside the study reviewed it and raised alarms, the Columbia professor pulled the article. The student, who was about to start work on a bright future as a faculty member at Princeton, found himself out of a job.

Thus, confirmation bias makes attempts at reasoned argument exhausting because it produces arguments and theories that are nonfalsifiable. It is the nature of confirmation bias itself to dismiss all contradictory evidence as irrelevant, and so my evidence is always the rule, your evidence is always a mistake or an exception. It’s impossible to argue with this kind of explanation, because by definition it’s never wrong.

Cause and effect, the nature of evidence, and statistical frequency are far more intricate than common sense can handle.

Conspiracy theories are also a way for people to give context and meaning to events that frighten them. Without a coherent explanation for why terrible things happen to innocent people, they would have to accept such occurrences as nothing more than the random cruelty either of an uncaring universe or an incomprehensible deity.

Conspiracy theories and the flawed reasoning behind them, as the Canadian writer Jonathan Kay has noted, become especially seductive “in any society that has suffered an epic, collectively felt trauma. In the aftermath, millions of people find themselves casting about for an answer to the ancient question of why bad things happen to good people.”

A 2015 study, for example, tested the reactions of both liberals and conservatives to certain kinds of news stories, and it found that “just as conservatives discount the scientific theories that run counter to their worldview, liberals will do exactly the same.”

3 - Higher Education

College is no longer a time devoted to learning and personal maturation; instead, the stampede of young Americans into college and the consequent competition for their tuition dollars have produced a consumer-oriented experience in which students learn, above all else, that the customer is always right.

Colleges now are marketed like multiyear vacation packages, rather than as a contract with an institution and its faculty for a course of educational study. This commodification of the college experience itself as a product is not only destroying the value of college degrees but is also undermining confidence among ordinary Americans that college means anything.

Part of the problem is that there are too many students, a fair number of whom simply don’t belong in college. The new culture of education in the United States is that everyone should, and must, go to college.

A college degree, whether in physics or philosophy, is supposed to be the mark of a truly “educated” person who not only has command of a particular subject, but also has a wider understanding of his or her own culture and history. It’s not supposed to be easy.

Today, professors do not instruct their students; instead, the students instruct their professors, with an authority that comes naturally to them. A group of Yale students in 2016, for example, demanded that the English department abolish its Major English Poets course because it was too larded with white European males: “We have spoken,” they said in a petition. “We are speaking. Pay attention.” As a professor at an elite school once said to me, “Some days, I feel less like a teacher and more like a clerk in an expensive boutique.”

Some educators even repeat the old saw that “I learn as much from my students as they learn from me!” (With due respect to my colleagues in the teaching profession who use this expression, I am compelled to say: if that’s true, then you’re not a very good teacher.)

The solution to this reversal of roles in the classroom is for teachers to reassert their authority.

It was probably inevitable that the anti-intellectualism of American life would invade college campuses, but that is no reason to surrender to it. And make no mistake: campuses in the United States are increasingly surrendering their intellectual authority not only to children, but also to activists who are directly attacking the traditions of free inquiry that scholarly communities are supposed to defend.

When feelings matter more than rationality or facts, education is a doomed enterprise. Emotion is an unassailable defense against expertise, a moat of anger and resentment in which reason and knowledge quickly drown. And when students learn that emotion trumps everything else, it is a lesson they will take with them for the rest of their lives.

Quietly, the professor said, “No, I don’t agree with that,” and the student unloaded on him: “Then why the [expletive] did you accept the position?! Who the [expletive] hired you?! You should step down! If that is what you think about being a master you should step down! It is not about creating an intellectual space! It is not! Do you understand that? It’s about creating a home here. You are not doing that!” [emphasis added] Yale, instead of disciplining students in violation of their own norms of academic discourse, apologized to the tantrum throwers. The house master eventually resigned from his residential post, while staying on as a faculty member. His wife, however, resigned her faculty position and left college teaching entirely. To faculty everywhere, the lesson was obvious: the campus of a top university is not a place for intellectual exploration. It is a luxury home, rented for four to six years, nine months at a time, by children of the elite who may shout at faculty as if they’re berating clumsy maids in a colonial mansion.

point to the bizarre paradox in which college students are demanding to run the school while at the same time insisting that they be treated as children.

4 - Let Me Google That for You

Ask any professional or expert about the death of expertise, and most of them will immediately blame the same culprit: the Internet.

The deeply entrenched and usually immutable views of Internet users are the foundation of Pommer’s Law, in which the Internet can only change a person’s mind from having no opinion to having a wrong opinion. There are many others, including my personal favorite, Skitt’s Law: “Any Internet message correcting an error in another post will contain at least one error itself.”

In the early 1950s, highbrow critics derided the quality of popular literature, particularly American science fiction. They considered sci-fi and fantasy writing a literary ghetto, and almost all of it, they sniffed, was worthless. Sturgeon angrily responded by noting that the critics were setting too high a bar. Most products in most fields, he argued, are of low quality, including what was then considered serious writing. “Ninety percent of everything,” Sturgeon decreed, “is crap.”

It’s an old saying, but it’s true: it ain’t what you don’t know that’ll hurt you, it’s what you do know that ain’t so.

As the comedian John Oliver has complained, you don’t need to gather opinions on a fact: “You might as well have a poll asking: ‘Which number is bigger, 15 or 5?’ or ‘Do owls exist?’ or ‘Are there hats?’”

5 - The “New” New Journalism, and Lots of It

A 2011 University of Chicago study found that America’s college graduates “failed to make significant gains in critical thinking and complex reasoning during their four years of college,” but more worrisome, they “also failed to develop dispositions associated with civic engagement.”

How can people be more resistant to facts and knowledge in a world where they are constantly barraged with facts and knowledge? The short answer where journalism is concerned — in an explanation that could be applied to many modern innovations — is that technology collided with capitalism and gave people what they wanted, even when it wasn’t good for them.

The rise of talk radio challenged the role of experts by reinforcing the popular belief that the established media were dishonest and unreliable. Radio talkers didn’t just attack established political beliefs: they attacked everything, plunging their listeners into an alternate universe where facts of any kind were unreliable unless verified by the host. In 2011, Limbaugh referred to “government, academia, science, and the media” as the “four corners of deceit,” which pretty much covered everyone except Limbaugh.

There is no way to discuss the nexus between journalism and the death of expertise without considering the revolutionary change represented by the arrival of Fox News in 1996. The creation of the conservative media consultant Roger Ailes, Fox made the news faster, slicker, and, with the addition of news readers who were actual beauty queens, prettier. It’s an American success story, in every good and bad way that such triumphs of marketing often are. (Ailes, in what seems almost like a made-for-television coda to his career, was forced out of Fox in 2016 after multiple allegations of sexual harassment were covered in great detail on the medium he helped create.)

As the conservative author and Fox commentator Charles Krauthammer likes to quip, Ailes “discovered a niche audience: half the American people.”

Saletan spent a year researching the food safety of genetically modified organisms (GMOs), a story that might exceed even the vaccine debate for the triumph of ignorance over science. “You can’t ask a young person to sort out this issue on the kind of time frame that’s generally tolerated these days,” Saletan said after his story — which blew apart the fake science behind the objections to GMOs — appeared in Slate.

In the great “chocolate helps you lose weight” hoax, for example, the hoaxers never thought they’d get as far as they did; they assumed that “reporters who don’t have science chops” would discover the whole faked study was “laughably flimsy” once they reached out to a real scientist. They were wrong: nobody actually tried to vet the story with actual scientists. “The key,” as the hoaxers later said, “is to exploit journalists’ incredible laziness. If you lay out the information just right, you can shape the story that emerges in the media almost like you were writing those stories yourself. In fact, that’s literally what you’re doing, since many reporters just copied and pasted our text.”

To experts, I will say, know when to say no. Some of the worst mistakes I ever made were when I was young and I could not resist giving an opinion. Most of the time, I was right to think I knew more than the reporter or the readers, but that’s not the point: I also found myself out on a few limbs I should have avoided.

The consumers of news have some important obligations here as well. I have four recommendations for you, the readers, when approaching the news: be humbler, be ecumenical, be less cynical, and be a lot more discriminating.

6 - When the Experts Are Wrong

"Even when the experts all agree, they may well be mistaken." -Bertrand Russell

In the 1970s, America’s top nutritional scientists told the United States government that eggs, among many other foods, might be lethal. There could be no simpler application of Occam’s Razor, with a trail leading from the barnyard to the morgue. Eggs contain a lot of cholesterol, cholesterol clogs arteries, clogged arteries cause heart attacks, and heart attacks kill people. The conclusion was obvious: Americans need to get all that cholesterol out of their diet. And so they did. Then something unexpected happened: Americans gained a lot of weight and started dying of other things. Eggs, it turned out, weren’t so bad, or at least they weren’t as bad as other things. In 2015 the government decided that eggs were acceptable, perhaps even healthy.

As the historian Nick Gvosdev observed some years later, many Soviet experts substituted what they believed, or wanted to believe, about the USSR in place of “critical analysis of the facts on the ground.” Two scholars of international relations noted that everyone else got it wrong, too. “Measured by its own standards, the [academic] profession’s performance was embarrassing,” Professors Richard Ned Lebow and Thomas Risse-Kappen wrote in 1995. “None of the existing theories of international relations recognized the possibility that the kind of change that did occur could occur.”

For these larger decisions, there are no licenses or certificates. There are no fines or suspensions if things go wrong. Indeed, there is very little direct accountability at all, which is why laypeople understandably fear the influence of experts.

What can citizens do when they are confronted with expert failure, and how can they maintain their trust in expert communities?

Science subjects itself to constant testing by a set of careful rules under which theories can only be displaced by better theories.

Yet another problem is when experts stay in their lane but then try to move from explanation to prediction. While the emphasis on prediction violates a basic rule of science — whose task is to explain, rather than to predict — society as a client demands far more prediction than explanation. Worse, laypeople tend to regard failures of prediction as indications of the worthlessness of expertise.

For example, Peter Duesberg, one of the leading AIDS denialists, remains at Berkeley despite accusations from critics that he engaged in academic misconduct, charges against him that his university investigated and dismissed in 2010.

As the New York Times reported in 2015, a “painstaking” effort to reproduce 100 studies published in three leading psychology journals found that more than half of the findings did not hold up when retested.

It is possible to fake one study. To fake hundreds and thus produce a completely fraudulent or dangerous result is another matter entirely.

What fraud does in any field, however, is to waste time and to delay progress.

As society has become more complex, however, the idea of geniuses who can hit to any and all fields makes less sense: “Benjamin Franklin,” the humorist Alexandra Petri once wrote, “was one of the last men up to whom you could go and say, ‘You invented a stove. What do you think we should do about these taxes?’ and get a coherent answer.”

The Nobel Prize–winning chemist Linus Pauling, for example, became convinced in the 1970s that Vitamin C was a wonder drug. He advocated taking mega-doses of the supplement to ward off the common cold and any number of other ailments. There was no actual evidence for Pauling’s claims, but Pauling had a Nobel in chemistry, and so his conclusions about the effect of vitamins seemed to many people to be a reasonable extension of his expertise.

Pauling, in the end, hurt not only his own reputation but also the health of potentially millions of people. As Offit put it, a “man who was so spectacularly right that he won two Nobel Prizes” was “so spectacularly wrong that he was arguably the world’s greatest quack.”

Unfortunately, when experts are asked for views outside their competence, few are humble enough to remember their responsibility to demur.

Prediction is a problem for experts. It’s what the public wants, but experts usually aren’t very good at it. This is because they’re not supposed to be good at it; the purpose of science is to explain, not to predict. And yet predictions, like cross-expertise transgressions, are catnip to experts.

What Tetlock actually found was not that experts were no better than random guessers, but that certain kinds of experts seemed better at applying knowledge to hypotheticals than their colleagues. Tetlock used the British thinker Isaiah Berlin’s distinction between “hedgehogs” and “foxes” to distinguish between experts whose knowledge was wide and inclusive (“the fox knows many things”) from those whose expertise is narrow and deep (“the hedgehog knows but one”). Tetlock’s study is one of the most important works ever written on how experts think, and it deserves a full reading. In general, however, one of his more intriguing findings can be summarized by noting that while experts ran into trouble when trying to move from explanation to prediction, the “foxes” generally outperformed the “hedgehogs,” for many reasons.

There’s an old joke about a British civil servant who retired after a long career in the Foreign Office spanning most of the twentieth century. “Every morning,” the experienced diplomatic hand said, “I went to the Prime Minister and assured him there would be no world war today. And I am pleased to note that in a career of 40 years, I was only wrong twice.”

Both experts and laypeople have responsibilities when it comes to expert failure. Professionals must own their mistakes, air them publicly, and show the steps they are taking to correct them. Laypeople, for their part, must exercise more caution in asking experts to prognosticate, and they must educate themselves about the difference between failure and fraud.

Tetlock, for one, has advocated looking closely at the records of pundits and experts as a way of forcing them to get better at giving advice, so that they will have “incentives to compete by improving the epistemic (truth) value of their products, not just by pandering to communities of co-believers.”

As the philosopher Bertrand Russell wrote in a 1928 essay, laypeople must evaluate expert claims by exercising their own careful logic as well. The skepticism that I advocate amounts only to this: (1) that when the experts are agreed, the opposite opinion cannot be held to be certain; (2) that when they are not agreed, no opinion can be regarded as certain by a non-expert; and (3) that when they all hold that no sufficient grounds for a positive opinion exist, the ordinary man would do well to suspend his judgment.

If laypeople refuse to take their duties as citizens seriously, and do not educate themselves about issues important to them, democracy will mutate into technocracy. The rule of experts, so feared by laypeople, will grow by default.

Conclusion - Experts and Democracy

Trump’s eventual victory, however, was also undeniably one of the most recent — and one of the loudest — trumpets sounding the impending death of expertise.

What Rhodes said, however, was different, and far more damaging to the relationship between experts and public policy. In effect, he bragged that the deal with Iran was sold by warping the debate among the experts themselves, and by taking advantage of the fact that the new media, and especially the younger journalists now taking over national reporting, wouldn’t know any better. “The average reporter we talk to is 27 years old, and their only reporting experience consists of being around political campaigns,” Rhodes said. “That’s a sea change. They literally know nothing.”

The relationship between experts and citizens, like almost all relationships in a democracy, is built on trust. When that trust collapses, experts and laypeople become warring factions. And when that happens, democracy itself can enter a death spiral that presents an immediate danger of decay either into rule by the mob or toward elitist technocracy. Both are authoritarian outcomes, and both threaten the United States today.

The abysmal literacy, both political and general, of the American public is the foundation for all of these problems.

In the absence of informed citizens, for example, more knowledgeable administrative and intellectual elites do in fact take over the daily direction of the state and society. In a passage often cited by Western conservatives and especially loved by American libertarians, the Austrian economist F. A. Hayek wrote in 1960: “The greatest danger to liberty today comes from the men who are most needed and most powerful in modern government, namely, the efficient expert administrators exclusively concerned with what they regard as the public good.”

In his seminal work on expertise, however, Philip Tetlock suggests other ways in which experts might be held more accountable without merely trashing the entire relationship between experts and the public. There are many possibilities, including more transparency and competition, in which experts in any field have to maintain a record of their work, come clean about how often they were right or wrong, and actually have journals, universities, and other gatekeepers hold their peers responsible more often for mistakes.

To ignore expert advice is simply not a realistic option, not only due to the complexity of policymaking, but because to do so is to absolve citizens of their responsibilities to learn about issues that matter directly to their own well-being. Moreover, when the public no longer makes a distinction between experts and policymakers and merely wants to blame everyone in the policy world for outcomes that distress them, the eventual result will not be better policy but more politicization of expertise. Politicians will never stop relying on experts; they will, however, move to relying on experts who will tell them — and the angry laypeople banging on their office doors — whatever it is they want to hear.

In 1787, Benjamin Franklin was supposedly asked what would emerge from the Constitutional Convention being held in Philadelphia. “A republic,” Franklin answered, “if you can keep it.” Today, the bigger challenge is to find anyone who knows what a republic actually is.

In the words of Ilya Somin, “When we elect government officials based on ignorance, they rule over not only those who voted for them but all of society. When we exercise power over other people, we have a moral obligation to do so in at least a reasonably informed way.”

The most poorly informed people among us are those who seem to be the most dismissive of experts and are demanding the greatest say in matters about which they have exerted almost no effort to educate themselves.

Put another way, when the public claims it has been misled or kept in the dark, experts and policymakers cannot help but ask, “How would you know?”

The British writer C. S. Lewis warned long ago of the danger to democracy when people no longer recognize any difference between political equality and actual equality, in a vivid 1959 essay featuring one of his most famous literary creations, a brilliant and evil demon named Screwtape.

The claim to equality, outside the strictly political field, is made only by those who feel themselves to be in some way inferior.

“I’m as good as you,” Screwtape chortles at the end of his address, “is a useful means for the destruction of democratic societies.”

Most causes of ignorance can be overcome, if people are willing to learn. Nothing, however, can overcome the toxic confluence of arrogance, narcissism, and cynicism that Americans now wear like a full suit of armor against the efforts of experts and professionals.

Education, instead of breaking down barriers to continued learning, is teaching young people that their feelings are more important than anything else. “Going to college” is, for many students, just one more exercise in personal self-affirmation.

Every single vote in a democracy is equal to every other, but every single opinion is not, and the sooner American society reestablishes new ground rules for productive engagement between the educated elite and the society they serve, the better.

The authors made these and the following comments in a press release from Ohio State University titled “Both Liberals, Conservatives Can Have Science Bias,” February 9, 2015.